When you hear that a huge percentage of electricity comes from renewables, what comes to mind?

Most people I ask in non-hydroelectric states assume that local solar and wind are being integrated into the grid in large quantities, and that it’s a hop, skip and a jump to twice that much. They also assume that solar and wind produce zero greenhouse gas (GHG) emissions.

An article based on a recent California Public Utilities Commission report encourages this kind of large-quantity thinking:

Two years ago, Gov. Jerry Brown signed an ambitious law ordering California utility companies to get 50 percent of their electricity from renewable sources by 2030.

It looks like they may hit that goal a decade ahead of schedule.

An annual report issued Monday by California regulators found that the state’s three big, investor-owned utilities — Pacific Gas and Electric Co., Southern California Edison and San Diego Gas & Electric Co. — are collectively on track to reach the 50 percent milestone by 2020, although individual companies could exceed the mark or fall just short of it.

In 2016, 32.9 percent of the electricity PG&E sold to its customers came from renewable sources, according to the report. Edison reached 28.2 percent renewable power in 2016, while SDG&E — the state’s smallest investor-owned utility — hit 43.2 percent.

Where does CA electricity come from?

In 2016, California generated 198,227 gigawatt hours (GWh; 1 GWh is a billion Wh, or a million kWh). California’s net imports (imports minus exports) were 92,341 GWh. Total consumption in 2016 was 290,568 GWh.

What percentage of current California consumption comes from in-state renewables?

| Source | GWh produced | % of CA electricity |
|---|---|---|
| Small Hydro | 4,567 | 1.6% |
| Biomass | 5,868 | 2.0% |
| Geothermal | 11,582 | 4.0% |
| Wind | 13,500 | 4.6% |
| Solar (concentrated solar)* | 2,548 | 0.9% |
| Solar (panels)* | 17,235 | 5.9% |
| Total Renewables | 55,300 | 19.0% |
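The percentages in the table can be reproduced from the GWh figures quoted above. The following is a minimal sketch; the variable and function names are illustrative choices, not part of any official dataset.

```python
# Figures quoted in the text for 2016, in GWh.
IN_STATE_GENERATION_GWH = 198_227
NET_IMPORTS_GWH = 92_341
TOTAL_CONSUMPTION_GWH = IN_STATE_GENERATION_GWH + NET_IMPORTS_GWH

# In-state renewable generation by source, in GWh (from the table).
IN_STATE_RENEWABLES_GWH = {
    "Small Hydro": 4_567,
    "Biomass": 5_868,
    "Geothermal": 11_582,
    "Wind": 13_500,
    "Solar (concentrated solar)": 2_548,
    "Solar (panels)": 17_235,
}

TOTAL_RENEWABLES_GWH = sum(IN_STATE_RENEWABLES_GWH.values())


def percent_of_consumption(gwh, total_gwh=TOTAL_CONSUMPTION_GWH):
    """Return gwh as a percentage of total California consumption."""
    return 100.0 * gwh / total_gwh


for source, gwh in IN_STATE_RENEWABLES_GWH.items():
    print(f"{source:<28} {gwh:>7,} GWh  {percent_of_consumption(gwh):4.1f}%")
print(f"{'Total Renewables':<28} {TOTAL_RENEWABLES_GWH:>7,} GWh  "
      f"{percent_of_consumption(TOTAL_RENEWABLES_GWH):4.1f}%")
```

Note that the denominator is total consumption (in-state generation plus net imports), not in-state generation alone.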

What is the difference between actual and reported numbers?

Of the 42,378 GWh imported from the Pacific Northwest, 11,710 GWh is California-built, mostly wind, but also biomass, small hydro, and a bit of geothermal. Of the 49,963 GWh purchased from the Southwest, 3,791 GWh is CA solar sited out of state, along with 2,097 GWh of wind and 1,038 GWh of geothermal.
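To see how the out-of-state renewables compare with the in-state total, the figures above can be tallied as follows. This is a rough sketch using only the numbers quoted in the text; the dictionary layout and names are illustrative.

```python
# In-state renewable generation quoted earlier, in GWh.
IN_STATE_RENEWABLES_GWH = 55_300

# Imports by region, in GWh.
IMPORTS_GWH = {"Pacific Northwest": 42_378, "Southwest": 49_963}

# California-built renewables sited out of state, in GWh.
OUT_OF_STATE_RENEWABLES_GWH = {
    "Pacific Northwest": {"mostly wind, plus biomass, small hydro, geothermal": 11_710},
    "Southwest": {"solar": 3_791, "wind": 2_097, "geothermal": 1_038},
}

# Sum the renewable GWh per region, then across regions.
region_totals = {
    region: sum(sources.values())
    for region, sources in OUT_OF_STATE_RENEWABLES_GWH.items()
}
out_of_state_total = sum(region_totals.values())

for region, gwh in region_totals.items():
    share = 100.0 * gwh / IMPORTS_GWH[region]
    print(f"{region}: {gwh:,} GWh renewable ({share:.1f}% of imports from that region)")

ratio_to_in_state = out_of_state_total / IN_STATE_RENEWABLES_GWH
print(f"Out-of-state renewables: {out_of_state_total:,} GWh "
      f"({100 * ratio_to_in_state:.1f}% of the in-state figure)")
```

On these figures, the out-of-state projects come to 18,636 GWh, about a third as much as the 55,300 GWh generated in state.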

It doesn’t make sense for CA to produce all its power in state. Traditionally, the Pacific Northwest supplies hydro in the winter and spring, for example, and California transmits power north in the summer. Southern California buys cheap coal power, most of which is generated out of state to avoid polluting California. The coal contracts will expire, but California will continue to import electricity. (Resource shuffling, in which California shifts its purchases to gas and another entity buys the coal power, may mean the actual drop in carbon dioxide emissions is less than if California built low-GHG generation in state.)

But now California is building out of state in order to pad the bottom line for renewables, even though these projects do not help with the California goal of figuring out how to better integrate solar and wind into the grid.

Does it matter that CA is not producing as much renewables as people assume?

Almost 1/3 of California renewable electricity is generated out of state.

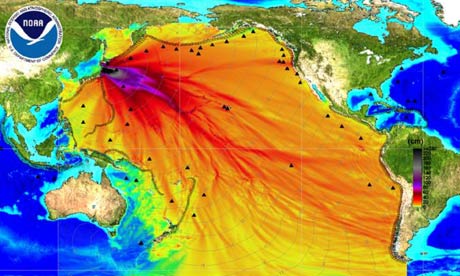

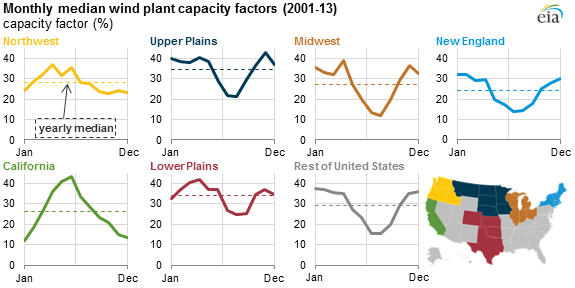

First, the goal appears to be renewables instead of decarbonization. Wind blows in the spring in the Pacific Northwest, competing with spring runoff during a low demand season. It blows other times of year, but peaks in the spring.

Some of this wind doesn’t help us address climate change. It does help CA meet renewables mandates.

Many assume that California is showing how easy it is to get 1/3 or 1/2 of electricity from intermittent wind and solar. We’re definitely not there yet. Possibly, though, a premature feeling of success leads to less questioning of the chosen path.

Low levels of wind + solar still lead to integration problems.

Considerably less than 10% of California electricity comes from solar, yet in the first half of 2017, prices averaged below 2 cents/kWh between 10 AM and 4 PM. In March and April, there were at least 19 days when prices were negative, days on which solar would have stopped producing in the absence of a production tax credit (2.4 cents/kWh).

The low price doesn’t mean that consumers pay less; rather, it means that building more generation for those hours doesn’t make economic sense. Nonetheless, California is building more solar.

Germany exports “typically half” of each solar (6%) + wind (12%) bump, demonstrating how hard it is to integrate them into its grid.

Germany solar and exports (figure 3)

Don’t all renewables have low GHG emissions? A positive, right?

How do wind and solar with gas backup compare to gas by itself? To nuclear and Washington hydro?

The Intergovernmental Panel on Climate Change estimates a median of 48 grams CO2-equivalent/kWh for solar panels over the complete life cycle. (Rooftop solar produces less electricity per panel than utility solar parks, so median GHG emissions are greater. Solar has higher emissions in areas with less sun, like Germany.) The median for wind is even lower, 11 g. (CO2-eq includes all greenhouse gas emissions, not just carbon dioxide.) While the fuel may be free, solar and wind require a number of manufacturing steps. Silicon used for solar panels has to be purified at 1,100°C (2,000°F).

Compared to natural gas at 510 g (Table 1.06), solar and wind appear much better, but backing them up with gas adds to their total. Not only do clouds and wind come and go, requiring gas to follow, but there is also a rapid ramp-up of gas between 4 PM and 7 – 8 PM, when electricity demand peaks.

A Carnegie Mellon analysis finds that backing up solar and wind has associated GHG emissions equal to 21-24% of those from gas generation. This is because gas plants, whether efficient or fast responding, run less efficiently when they are ramped up and down to follow solar and wind, just as the fuel economy of cars falls when they change speed often. So 1 kWh of solar or wind in California has GHG emissions associated with manufacture, somewhere around 11 or 48 g, plus another 105 to 120 grams from the backup, for a total of 115 to 165 grams/kWh. That is still better than gas: about 1/4 to 1/3 as bad.
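The arithmetic in this paragraph can be laid out as a short calculation. The sketch below uses only the figures quoted in the text (510 g for gas, 11 g and 48 g for wind and solar, and a 21-24% backup penalty); the function and constant names are illustrative, and the unrounded results land slightly above the rounded range given above.

```python
# Emissions figures quoted in the text, in g CO2-eq per kWh.
GAS_G_PER_KWH = 510
LIFECYCLE_G_PER_KWH = {"wind": 11, "solar": 48}

# Backup penalty range: extra emissions as a fraction of gas emissions.
BACKUP_PENALTY_LOW = 0.21
BACKUP_PENALTY_HIGH = 0.24


def emissions_with_backup(lifecycle_g, penalty_fraction, gas_g=GAS_G_PER_KWH):
    """Life-cycle emissions plus the gas-backup penalty, in g CO2-eq/kWh."""
    return lifecycle_g + penalty_fraction * gas_g


# Best case: wind with the low penalty. Worst case: solar with the high penalty.
low = emissions_with_backup(LIFECYCLE_G_PER_KWH["wind"], BACKUP_PENALTY_LOW)
high = emissions_with_backup(LIFECYCLE_G_PER_KWH["solar"], BACKUP_PENALTY_HIGH)

print(f"Range with backup: {low:.0f} to {high:.0f} g CO2-eq/kWh")
print(f"Relative to gas:   {low / GAS_G_PER_KWH:.2f} to {high / GAS_G_PER_KWH:.2f}")
```

Without rounding, the range comes out at roughly 118 to 170 g, or about 0.23 to 0.33 of the gas figure, consistent with the "1/4 to 1/3 as bad" estimate.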

While it’s difficult to measure directly, the extra emissions appear to have been observed. The California Public Utilities Commission found that between 2001 and 2016, the most efficient gas plants, which are not used primarily for backing up solar + wind, are now producing 5% more carbon dioxide (Draft 2017 IEPR last line page 103).

When there is little solar and wind, in the winter, California imports hydro from the Pacific Northwest, and uses gas.

By contrast, nuclear produces 12 g CO2-eq/kWh, according to the IPCC. Hydro may be even lower.

It will take some work to integrate more solar and wind onto the grid

California will continue to add more renewables, so changes are needed. The National Renewable Energy Laboratory (NREL) tackles how to increase California’s in-state level of solar power to 28% in Emerging Issues and Challenges in Integrating High Levels of Solar into the Electrical Generation and Transmission System. If all goes as planned, this will lead to 30% of solar being curtailed (tossed), compared to a bit under 2% today. Solutions include better forecasting and changing demand response in a variety of ways (changing the time of day power is used, and how decisions are made). Energy storage (batteries) will also be needed, although batteries are energy intensive: batteries made with coal power result in emissions of 100+ g CO2-eq/kWh for the least energy-intensive battery, on top of the emissions from power generation.

Perhaps the Northwest can send less hydro mid-day and more around 7 – 8 PM. Pumped hydro storage might work with wind, so that it becomes available later in the day.

Both battery storage and curtailment require extra solar + wind, and therefore extra emissions (some 20% of electricity is lost in the charge-discharge cycle). However, GHG emissions of solar production should drop some.
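A rough way to see why storage losses and curtailment raise emissions per kWh actually used is to scale the generation needed. The sketch below is a simplification under stated assumptions: it takes the 20% round-trip loss and the 30% curtailment figure from the text, introduces a hypothetical `stored_fraction` parameter for the share of generation routed through batteries, and ignores battery manufacturing and gas backup emissions.

```python
SOLAR_LIFECYCLE_G_PER_KWH = 48  # IPCC median quoted earlier in the text


def generation_per_delivered_kwh(stored_fraction, round_trip_loss=0.20):
    """kWh that must be generated to deliver 1 kWh when stored_fraction
    of generation passes through storage with the given round-trip loss.
    stored_fraction is a hypothetical input, not a figure from the text."""
    delivered_per_generated = (
        (1 - stored_fraction) + stored_fraction * (1 - round_trip_loss)
    )
    return 1 / delivered_per_generated


def generation_per_used_kwh(curtailed_fraction):
    """kWh of potential generation per kWh used when a fraction is curtailed."""
    return 1 / (1 - curtailed_fraction)


# Every kWh routed through storage: 1 / 0.8 kWh generated per kWh delivered.
all_stored = generation_per_delivered_kwh(stored_fraction=1.0)

# 30% curtailment: 1 / 0.7 kWh of panel output per kWh actually used.
curtailed = generation_per_used_kwh(curtailed_fraction=0.30)

print(f"Generation per delivered kWh, all stored: {all_stored:.2f}")
print(f"Generation per used kWh, 30% curtailed:   {curtailed:.2f}")
print(f"Solar manufacturing emissions per used kWh at 30% curtailment: "
      f"{SOLAR_LIFECYCLE_G_PER_KWH * curtailed:.0f} g CO2-eq")
```

Under these assumptions, solar’s manufacturing emissions per kWh used rise from 48 g to roughly 69 g at 30% curtailment, before counting batteries or backup.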

Is this the path CA should be on?

It would be useful to see this question answered by comparing alternatives. California has currently chosen a higher GHG path than some to address the knotty problems that come with double-digit percentages of wind or solar. Progress is being made, although the popular idea that 30% of 2020 electricity will come from zero-GHG renewables is incorrect.